
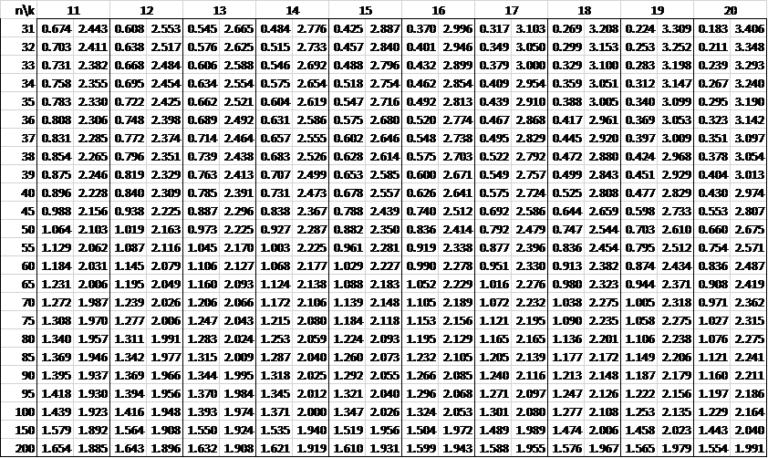

Temporal autocorrelation (i.e. serial correlation) is a special case of correlation, and refers not to the relationship between two or more variables, but to the relationship between successive values of the same variable. It is closely related to the correlation coefficient between two or more variables, except that in this case we do not deal with variables X and Y, but with lagged values of the same variable.

Most regression methods that are used in crash modeling assume that the error terms are independent of one another, i.e. that they are uncorrelated. This assumption is formally expressed as:

$$E[\varepsilon_i \varepsilon_j] = 0 \quad \text{for } i \neq j$$

where $E[\cdot]$ is the expected value of all pair-wise products of error terms, and $\varepsilon_i$, $\varepsilon_j$ are the error terms of the i and j observations respectively. This means that the expected value of all pair-wise products of error terms is zero: when the error terms are uncorrelated, the positive products cancel those that are negative, leaving an expected value of 0.0.

If this assumption is violated, the standard errors of the estimates of the regression parameters are underestimated, which leads to erroneously inflated test statistics and incorrect confidence intervals. The presence of correlated error terms means that these types of inferences cannot be made reliably.

The violation of this assumption occurs because of some temporal (time) component (i.e. heterogeneity due to time) that can affect observations drawn across time, such as time series data, panel data in the form of serial correlation, and any other dataset that might be collected over a period of time. In this context, the error in one time period is related to the error in another time period (the previous period, the next period, or beyond). For example, we might expect the disturbance (i.e. error term) in year t to be correlated with the disturbance in year t − 1 and with the disturbance in year t + 1, t + 2, and so on.

If there are factors responsible for inflating the observation at some point in time to an extent larger than expected (i.e. a positive error), then it is reasonable to expect that the effects of those same factors linger, creating an upward (positive) bias in the error term of a subsequent period. This phenomenon is called positive first-order autocorrelation, which is the most common manner in which the assumption of independence of errors is violated. Autocorrelation can also appear at longer lags. For instance, if a dataset is influenced by quarterly seasonal factors, then a resulting model that ignores the seasonal factors will have correlated error terms with a lag of four periods.

There are different structure types of temporal autocorrelation: 1st order, 2nd order, and so on. The form of temporal autocorrelation that is encountered most often is the first order temporal autocorrelation in the first autoregressive term, which is denoted by AR(1). The AR(1) autocorrelation assumes that the disturbance in time period t (current period) depends upon the disturbance in time period t − 1 (previous period) plus some additional amount, which is an error, and can be modeled as:

$$\varepsilon_t = \rho \, \varepsilon_{t-1} + u_t$$

where $\varepsilon_t$ is the disturbance in time period t, $\varepsilon_{t-1}$ is the disturbance in time period t − 1, $\rho$ is the autocorrelation parameter, and $u_t$ is the additional error. The parameter ρ can take any value between negative one and positive one. If ρ > 0, then the disturbances in period t are positively correlated with the disturbances in period t − 1. In this case, positive autocorrelation exists, which means that when disturbances in period t − 1 are positive, disturbances in period t tend to be positive, and when disturbances in period t − 1 are negative, disturbances in period t tend to be negative. Temporal datasets are usually characterized by positive autocorrelation.

The Durbin-Watson (DW) statistic is used to test the null hypothesis of no first order autocorrelation (ρ = 0) in the residuals. Given the lower and upper critical values DL and DU, the test for positive autocorrelation is:

a) If DW < DL then there is positive autocorrelation.

b) If DW > DU then there is no positive autocorrelation.

c) If DL < DW < DU then the test is inconclusive.

The test for negative autocorrelation is:

a) If DW > (4.0 − DL) then there is negative autocorrelation.

b) If DW < (4.0 − DU) then there is no negative autocorrelation.

c) If (4.0 − DU) < DW < (4.0 − DL) then the test is inconclusive.

A rule of thumb that is sometimes used is to conclude that there is no first order temporal autocorrelation if the DW statistic is between 1.5 and 2.5. A DW statistic below 1.5 indicates positive first order autocorrelation. A DW statistic greater than 2.5 indicates negative first order autocorrelation. Alternatively, a significant p-value for the DW statistic would suggest rejecting the null hypothesis and concluding that there is first order autocorrelation in the residuals, and a non-significant p-value would suggest failing to reject the null hypothesis and concluding that there is no evidence of first order autocorrelation in the residuals.
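As a rough illustration of first order autocorrelation and the DW rule of thumb described in this post, the sketch below simulates disturbances in which each value depends on the previous one, then computes a DW statistic from them. The function names (`simulate_ar1`, `durbin_watson`, `rule_of_thumb`) are hypothetical. The DW formula used is the standard textbook definition (the sum of squared successive differences divided by the sum of squares) rather than something stated in the text, and the series is simulated directly instead of coming from a fitted crash model.

```python
import random


def simulate_ar1(rho, n, seed=0):
    """Simulate n disturbances where e_t = rho * e_{t-1} + u_t (u_t ~ N(0, 1))."""
    rng = random.Random(seed)
    e = [rng.gauss(0.0, 1.0)]
    for _ in range(n - 1):
        e.append(rho * e[-1] + rng.gauss(0.0, 1.0))
    return e


def durbin_watson(residuals):
    """Standard DW statistic: sum of squared successive differences over sum of squares."""
    num = sum((residuals[t] - residuals[t - 1]) ** 2 for t in range(1, len(residuals)))
    den = sum(r * r for r in residuals)
    return num / den


def rule_of_thumb(dw):
    """Apply the 1.5 / 2.5 rule of thumb for first order autocorrelation."""
    if dw < 1.5:
        return "positive"
    if dw > 2.5:
        return "negative"
    return "none"


if __name__ == "__main__":
    for rho in (0.8, 0.0, -0.8):
        dw = durbin_watson(simulate_ar1(rho, n=2000))
        print(f"rho={rho:+.1f}  DW={dw:.2f}  rule of thumb: {rule_of_thumb(dw)}")
```

For long series the DW statistic is approximately 2(1 − ρ), which is why values near 2 suggest no first order autocorrelation, values near 0 suggest strong positive autocorrelation, and values near 4 suggest strong negative autocorrelation. In practice the statistic would be computed on regression residuals and compared with tabulated DL and DU values.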
